Introduction

Technology has evolved and has become part of people’s lives today. Information systems (IS) are now embraced in all spheres of management, largely because of their efficiency and reliability in different fields. Knowledge of IS has enabled organizations to control advanced sectors (Dastmalchian et al., 2020). IS helps separate raw data from factual, usable information, which is valuable to any firm. A company must always follow a specific protocol when handling transactions, whether placing orders for a customer or processing many invoices. The two most popular methods are batch processing and real-time processing. Both allow an organization to organize, save, and access previous transactions. The primary distinction between the two is timing: real-time processing handles each transaction as the change occurs, whereas batch processing accumulates transactions and processes them together later. In the financial industry, there have been attempts to migrate the batch processing of bank statements to near real-time automation using software such as Apache Kafka and AWS Kinesis.

Background Information

Real-time data processing handles the input and output of data almost immediately. The framework takes less time and requires a continuous flow of data, since output follows immediately after input (Kraetz & Morawski, 2021). In batch processing, by contrast, a sizable static dataset is processed and the result is delivered only after all calculations are complete (Gurusamy et al., 2017). Because of this grouping, batch processing generally takes longer than real-time processing. Since businesses handle data from various sources and for different purposes, batch processing must be able to support high-volume data analysis, and systems that handle huge quantities of data use it in their operations. Because a batch system runs without a user interface, its jobs can run unattended, which makes the approach practical and widely adopted. In the financial industry, batch processing systems are used for analysis, control, compilation of organizational records, and report generation. Generally, their reliability makes them a necessity in business operations today.

Dealing with data has become a key element of business today. Handlers must learn batch processing to ease the handling of sensitive data (Jawhar et al., 2020). Batch processing systems are part of the technology incorporated into today’s business sphere. In most cases, the IS components must cooperate to gather, transfer, preserve, retrieve, modify, and display data. These components interact to produce information that helps people and organizations filter, process, and distribute data (O’Connor et al., 2022). The hardware component refers to the physical equipment and its associated input and output devices, which communicate toward a common goal. The hardware is supported by the software, which refers to the programs that run on a computer.

Batch and real-time approaches are often used to process transactions in large industries, such as the financial sector, that deal with money, clients, and employees. Although the systems that manage this step of the data life cycle can be complicated, the overall objective is to advance comprehension, surface patterns, and gain insight into intricate interactions (Gurusamy et al., 2017). In a batch processing system, transactions are collected over time and processed together as a single batch. For instance, a department might update its sales statistics daily after closing. Similarly, a payroll system may go through all the time cards every two weeks to calculate employee earnings and generate paychecks. In a batch system, there is always a lag between the actual event and the processing of the transaction that updates the company’s records. In contrast, a real-time processing system processes transactions as they happen, without waiting for them to accumulate. Generally, although real-time processing is often more responsive, batch processing can be more efficient for periodic workloads such as payroll.
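To make the contrast concrete, the following Python sketch applies the same transactions in both styles. The `Transaction` type, the account names, and the amounts (integer cents) are hypothetical; the point is only that the batch function waits for a complete list, while the real-time processor updates balances event by event.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    account: str
    amount_cents: int  # positive = deposit, negative = withdrawal


def process_batch(transactions, balances):
    """Batch style: transactions accumulate first and are applied in one run."""
    updated = dict(balances)
    for tx in transactions:
        updated[tx.account] = updated.get(tx.account, 0) + tx.amount_cents
    return updated


class RealTimeProcessor:
    """Real-time style: each transaction updates the balance as soon as it arrives."""

    def __init__(self, balances):
        self.balances = dict(balances)

    def on_transaction(self, tx):
        self.balances[tx.account] = self.balances.get(tx.account, 0) + tx.amount_cents
        return self.balances[tx.account]


pending = [Transaction("A", 5000), Transaction("A", -1200), Transaction("B", 300)]

# Batch: records are updated only when the accumulated list is processed, e.g. after closing
end_of_day = process_batch(pending, {"A": 10000})

# Real time: records are current after every single event
live = RealTimeProcessor({"A": 10000})
for tx in pending:
    live.on_transaction(tx)

print(end_of_day)  # {'A': 13800, 'B': 300}
print(live.balances)  # {'A': 13800, 'B': 300}
```

Both approaches end in the same state; what differs is when the records become current.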

An Example of a Batch System

Bank statements are a good example of batch processing in the financial industry. It would be unusual for banks to issue a statement after every transaction. A bank statement contains specific information: personal details, the period covered, and banking details, including the balance at the beginning and end of the period and any deposits or withdrawals (Marquit, 2022). In essence, many transactions from different clients must be compiled over time to generate a single statement for each customer. The statement is produced periodically, usually monthly. The individual transactions for the period must be completed and sorted by date and time before the statement is generated.
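The compilation step can be sketched in Python as grouping one period’s transactions by customer and sorting each group chronologically. The record layout, customer IDs, and amounts below are hypothetical, and a real statement run would read them from the bank’s transaction store rather than from a list.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical records for one period: (customer_id, timestamp, description, amount_cents)
transactions = [
    ("C2", datetime(2022, 3, 3, 9, 15), "Deposit", 20000),
    ("C1", datetime(2022, 3, 5, 14, 0), "Card payment", -4550),
    ("C1", datetime(2022, 3, 1, 8, 30), "Salary", 250000),
    ("C2", datetime(2022, 3, 2, 17, 45), "ATM withdrawal", -6000),
]


def compile_statements(records):
    """Group a period's transactions by customer and order each group by date and time."""
    by_customer = defaultdict(list)
    for customer_id, ts, description, amount in records:
        by_customer[customer_id].append((ts, description, amount))
    # Sorting happens once, after the whole period's data is available
    return {cid: sorted(rows) for cid, rows in by_customer.items()}


statements = compile_statements(transactions)
for cid in sorted(statements):
    print(f"Statement for {cid}")
    for ts, description, amount in statements[cid]:
        print(f"  {ts:%Y-%m-%d %H:%M}  {description:<15}{amount / 100:>10.2f}")
```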

Batch System Architecture

A batch system is built from three architectural components, which serve as the input, processing, and output stages. Together, they form a seamless batch process. The dataset is usually persistent, large, and bounded because it serves both the input and the output stages (Gurusamy et al., 2017). As information is obtained from a queue, the processor computes the data according to the business requirements. The output is therefore not raw data but processed data that is ready for use. After processing, the output may be printed or stored in a database for the next step, if required. Additionally, a batch system is function-oriented rather than object-oriented, because its data is processed serially and does not need to be retrieved and held as individual object state. This is evident in jobs such as printing invoices and financial statements or issuing renewals.
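The three stages can be illustrated as three plain functions chained together, which also reflects the function-oriented, serial style described above. This is a minimal sketch under assumed inputs: the CSV layout, field names, and invoice data are hypothetical.

```python
import csv
import io

# Input stage data: a hypothetical CSV extract of the day's invoices
RAW_INPUT = """invoice_id,customer,quantity,unit_price_cents
INV-001,Acme,3,1500
INV-002,Globex,1,9900
INV-003,Acme,2,250
"""


def read_input(text):
    """Input stage: load the bounded dataset in full."""
    return list(csv.DictReader(io.StringIO(text)))


def process(rows):
    """Processing stage: turn raw rows into priced invoice lines, one record at a time."""
    return [
        {
            "invoice_id": row["invoice_id"],
            "customer": row["customer"],
            "total_cents": int(row["quantity"]) * int(row["unit_price_cents"]),
        }
        for row in rows
    ]


def write_output(invoices):
    """Output stage: render the results (printed here; a database write would also work)."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=["invoice_id", "customer", "total_cents"])
    writer.writeheader()
    writer.writerows(invoices)
    return out.getvalue()


report = write_output(process(read_input(RAW_INPUT)))
print(report)
```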

Batch processing works effectively for calculations that require access to all records. For example, calculating totals and averages requires the dataset to be treated as a whole rather than as a collection of individual records. These operations require state to be maintained for the duration of the computation (Gurusamy et al., 2017). A bank statement is intended to show in detail what happened with a person’s account over the previous month, including their spending patterns and any expenses. Most bank statements begin by compiling all deposits, giving customers a clear picture of what was deposited into their accounts during the previous month (Marquit, 2022). Clients can then see a summary of withdrawal activity. The summary includes the account balance at the start of the period, followed by the outstanding balance at the end of the period after all deposits and withdrawals have been tallied.
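The statement summary described above is a whole-dataset calculation, which can be sketched as follows. The amounts are hypothetical, and `Decimal` is used because binary floating point cannot represent most currency values exactly.

```python
from decimal import Decimal


def summarize_statement(opening_balance, transactions):
    """Compute a monthly statement summary.

    The totals depend on every transaction in the period, so the running
    state (sums and counts) must be kept for the whole computation.
    """
    deposits = [amt for amt in transactions if amt > 0]
    withdrawals = [-amt for amt in transactions if amt < 0]
    total_deposits = sum(deposits, Decimal("0"))
    total_withdrawals = sum(withdrawals, Decimal("0"))
    closing_balance = opening_balance + total_deposits - total_withdrawals
    average_withdrawal = (
        total_withdrawals / len(withdrawals) if withdrawals else Decimal("0")
    )
    return {
        "opening_balance": opening_balance,
        "total_deposits": total_deposits,
        "total_withdrawals": total_withdrawals,
        "closing_balance": closing_balance,
        "average_withdrawal": average_withdrawal,
    }


# Hypothetical month: positive amounts are deposits, negative amounts are withdrawals
month = [Decimal("2500.00"), Decimal("-45.50"), Decimal("-120.00"), Decimal("200.00")]
summary = summarize_statement(Decimal("1000.00"), month)
for name, value in summary.items():
    print(f"{name:<20}{value:>12}")
```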

The following are some of the basic components of a batch processing architecture, such as one used for bank statements:

Apache Hadoop

Hadoop was the first big data platform to experience considerable growth in the open-source community. It provides the algorithms and software stack needed to make large-scale batch operations accessible (Gurusamy et al., 2017). Hadoop-based systems help tackle common business concerns such as fraud, financial crime, and data breaches. Banks can detect and reduce fraud by analyzing point-of-sale, authorization, and transaction data, among other sources. Big data analysis significantly reduces the time and resources needed for these tasks, helping identify unexpected trends and alert banks to them. By serving as an interface to the cluster’s resources, Hadoop’s resource manager, YARN, enables a wider variety of workloads to run on a Hadoop cluster than was feasible in earlier versions.
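As an illustration of the kind of rule such an analysis might apply, the sketch below screens a full period of authorization and transaction data for two simple warning signs. The rules and thresholds (an amount above three times the account’s median, or more than two declined authorizations) are hypothetical, and the code runs on a single machine, whereas Hadoop would distribute the same scan across a cluster.

```python
from collections import defaultdict
from statistics import median

# Hypothetical records for one period: (account, authorized, amount_cents)
records = [
    ("A1", True, 2500),
    ("A1", True, 3100),
    ("A1", True, 2800),
    ("A1", True, 48000),
    ("B7", False, 9900),
    ("B7", False, 9900),
    ("B7", False, 9900),
    ("B7", True, 1200),
]


def flag_suspicious(rows, amount_factor=3, max_declines=2):
    """Batch fraud screen over a complete period (illustrative rules only)."""
    amounts = defaultdict(list)
    declines = defaultdict(int)
    for account, authorized, amount in rows:
        if authorized:
            amounts[account].append(amount)
        else:
            declines[account] += 1

    flags = []
    # Rule 1: an authorized amount far above the account's typical (median) amount
    for account, values in amounts.items():
        typical = median(values)
        flags.extend((account, "amount", v) for v in values if v > amount_factor * typical)
    # Rule 2: repeated declined authorizations
    flags.extend((account, "declines", n) for account, n in declines.items() if n > max_declines)
    return flags


print(flag_suspicious(records))  # [('A1', 'amount', 48000), ('B7', 'declines', 3)]
```

A median is used instead of a mean so that the outlier being tested does not inflate its own baseline.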

Hadoop Distributed File System (HDFS)

HDFS, Hadoop’s distributed file system component, manages data storage and replication across the nodes of a cluster. It is the storage layer of Hadoop applications. HDFS keeps data accessible despite the host failures that inevitably occur (Gurusamy et al., 2017). It also serves as the data source and stores intermediate processing results as well as the results of the final calculations. In a banking deployment, MapReduce processes the data, HDFS stores it, and YARN allocates cluster resources among the tasks.
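The division of labor described above can be illustrated with a plain-Python model of the MapReduce phases (map, shuffle and sort, reduce) that computes each account’s net movement for a period. This only mimics the programming model on one machine; it is not Hadoop’s API, and the hypothetical comma-separated input lines stand in for files that would be read from HDFS while YARN schedules the map and reduce tasks.

```python
from itertools import groupby
from operator import itemgetter

# Hypothetical input lines, as they might be stored in HDFS: account,date,amount_cents
LINES = [
    "A1,2022-03-01,250000",
    "B7,2022-03-02,-6000",
    "A1,2022-03-05,-4550",
    "B7,2022-03-03,20000",
]


def mapper(line):
    """Map phase: emit (key, value) pairs, here (account, amount)."""
    account, _date, amount = line.split(",")
    yield account, int(amount)


def reducer(account, amounts):
    """Reduce phase: combine all values that share a key."""
    return account, sum(amounts)


def run_mapreduce(lines):
    # Map: each input line is handled independently (Hadoop would run mappers on many nodes)
    pairs = [pair for line in lines for pair in mapper(line)]
    # Shuffle and sort: bring together all pairs that share a key
    pairs.sort(key=itemgetter(0))
    # Reduce: one call per key group
    return [
        reducer(key, (amount for _, amount in group))
        for key, group in groupby(pairs, key=itemgetter(0))
    ]


print(run_mapreduce(LINES))  # [('A1', 245450), ('B7', 14000)]
```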

Advantages of Batch Systems

Batch processing has several benefits, the primary one being lower cost. Because the jobs run less frequently, batch processing is more affordable; for instance, most banks offer bank statements to their clients for free. Businesses view batch processing as a fixed expense, so the more batches processed in a given period, the lower the cost of each one. The well-proven batch processing approach of Apache Hadoop and its MapReduce computing engine is better suited to handling massive datasets when speed is not a significant consideration (Gurusamy et al., 2017). A final benefit of batch processing is that it allows the company to edit the data before it is distributed. If necessary, transactions can be changed without creating additional processes.
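The fixed-cost argument is simple arithmetic. Assuming a fixed cost F per period plus a small marginal cost v for each batch run, the cost per batch is F/n + v, which falls as the number of batches n grows. The figures below are hypothetical.

```python
def cost_per_batch(fixed_cost, batches, marginal_cost=0.0):
    """Average cost of one batch when a fixed period cost is shared across `batches` runs."""
    if batches <= 0:
        raise ValueError("batches must be a positive integer")
    return fixed_cost / batches + marginal_cost


# Hypothetical figures: a fixed cost of 12,000 per month and 50 per additional run
for n in (1, 4, 30):
    print(f"{n:>2} batches -> {cost_per_batch(12_000, n, 50):,.2f} per batch")
```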

The Downsides of Batch Systems

Batch processing also has drawbacks. One is that individual transactions can be lost within a large batch. If the batch contains too much data, it can be difficult to identify and correct an error. Consider a business that processes its accounts receivable once a month: when that much is processed at once, some of the bills sent out may contain mistakes. This issue can be mitigated by splitting the batch processing into two or even three distinct processes. Overall, batch processing of bank statements is most effective only when a large volume of data needs to be sent out at once.
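Splitting a large batch can be sketched as processing it in fixed-size chunks, so that a bad record holds back only its own chunk and the chunk’s position points directly at the problem. The chunk size, the `bill` step, and its validation rule are hypothetical.

```python
def process_in_chunks(items, handle, chunk_size):
    """Split one large batch into smaller chunks so a failure is confined to one chunk."""
    succeeded, failed = [], []
    for start in range(0, len(items), chunk_size):
        chunk = items[start:start + chunk_size]
        try:
            results = [handle(item) for item in chunk]
        except ValueError as err:
            # Only this chunk is held back; its index range locates the error
            failed.append((start, start + len(chunk), str(err)))
        else:
            succeeded.extend(results)
    return succeeded, failed


def bill(amount_cents):
    """Hypothetical billing step: rejects invalid amounts and adds a 20% charge."""
    if amount_cents <= 0:
        raise ValueError(f"invalid amount {amount_cents}")
    return amount_cents + amount_cents // 5


receivables = [1000, 2500, -300, 400, 800, 1500]
billed, problems = process_in_chunks(receivables, bill, chunk_size=2)
print(billed)  # [1200, 3000, 960, 1800]
print(problems)  # [(2, 4, 'invalid amount -300')]
```

Smaller chunks localize errors more precisely but add per-run overhead, which erodes some of the cost advantage discussed earlier.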

Grouped data and generated batches are not easy to monitor while they are being processed. According to Gurusamy et al. (2017), batch systems are generally slow because every step reads from and writes to permanent storage. Because the process cannot be observed as it runs, debugging takes considerable time: tracing an error requires working back through a long chain of steps. A lengthy debugging period is itself a risk, since the safety of the information during that time is uncertain. Errors in the process are also costly. For instance, when a process malfunctions and resolving the issue is delayed, the entire process may have to be held for an unknown period, complicating the process and delaying the organization’s operations.

Software Architecture to Move the Batch System to Near Real-Time Processing

In today’s business world, and in the financial sector specifically, organizations feel the need to migrate from batch processing systems to near real-time processing. According to Kraetz and Morawski (2021), real-time processing produces more cost-effective output than batch systems. The main reason financial institutions are migrating is risk management. In real-time processing, a risk is detected and countermeasures are applied almost immediately. In batch processing, by contrast, more time may be needed to break the batches back down into individual records before the risk can be identified and countermeasures set up. This can delay output, as the inflow of data must be postponed. Because convenience is the critical marker of today’s e-commerce platforms, real-time personalization is valuable, and businesses today strive to deliver top-tier personalized customer service. Modern services such as ride-hailing must show customers, in real time, that they are getting value for their money. In such cases, real-time processing is regarded as an asset in the modern business world.
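Immediate risk detection can be illustrated with a sliding-window rate check that evaluates each transaction the moment it arrives. The rule (more than three transactions from one account within 60 seconds) and the event data are hypothetical, and the sketch assumes events arrive in timestamp order.

```python
from collections import defaultdict, deque


class VelocityMonitor:
    """Flags an account as soon as it exceeds a transaction-rate limit.

    Illustrative rule: more than `max_tx` transactions within `window_s` seconds.
    """

    def __init__(self, max_tx=3, window_s=60):
        self.max_tx = max_tx
        self.window_s = window_s
        self.recent = defaultdict(deque)  # account -> timestamps inside the window

    def on_transaction(self, account, ts):
        window = self.recent[account]
        window.append(ts)
        # Drop timestamps that have slid out of the window
        while window and ts - window[0] > self.window_s:
            window.popleft()
        return len(window) > self.max_tx  # True means "flag now"


monitor = VelocityMonitor(max_tx=3, window_s=60)
# Hypothetical events: (account, seconds since start)
events = [("A1", 0), ("A1", 10), ("B7", 15), ("A1", 20), ("A1", 30), ("A1", 200)]
alerts = [(acct, ts) for acct, ts in events if monitor.on_transaction(acct, ts)]
print(alerts)  # [('A1', 30)]
```

Unlike a month-end scan, the decision is available at the moment of the fourth rapid transaction rather than after the batch has been compiled.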

Most of the challenges described above are largely absent from real-time processing. These relatively few downsides explain why most organizations, especially in the financial sector, are trying to migrate to real-time processing. Computerized bank data extraction software also simplifies the underwriting process compared with manual data input. Available software for moving a batch system to near real-time processing includes Apache Kafka and AWS Kinesis.

Kafka

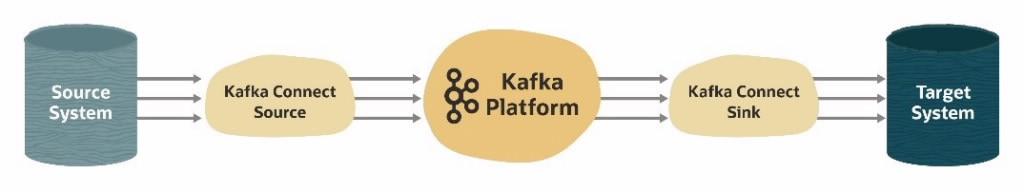

Software developers have built solutions for migrating from batch processing systems to real-time processing. One such solution is Apache Kafka, which stores data and avoids duplicate ETL (extract, transform, load) processes, as shown in Figure 1. A significant benefit of Kafka is that it decouples the infrastructure from the applications. This technology seeks to bridge the gap between batch and real-time processing. It also provides a well-defined data flow that improves data-handling efficiency. Overall, processing takes less time and offers the organization high data integrity.

Kafka provides many advantages in addition to eliminating end-of-day dependencies. These include the ability to replay feeds if a problem is found with previously processed information, and the avoidance of duplicate ETL components for each destination system. Various use cases for event streaming with Apache Kafka have emerged in the financial sector, including big data analytics and mission-critical transactional workloads such as payment processing and regulatory reporting.
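The two advantages above, replaying feeds and serving several destination systems without duplicate ETL, follow from Kafka’s design as a replayable log that each consumer group reads at its own offset. The toy model below illustrates that idea in memory; `EventLog`, its methods, and the service names are hypothetical and are not the Kafka client API, although real Kafka consumers offer comparable offset tracking and seeking.

```python
class EventLog:
    """In-memory stand-in for a Kafka-style topic: an append-only, replayable log.

    Conceptual model only; it does not use or imitate the Kafka client API.
    """

    def __init__(self):
        self._records = []
        self._offsets = {}  # consumer group name -> next offset to read

    def append(self, record):
        self._records.append(record)
        return len(self._records) - 1  # offset assigned to the new record

    def poll(self, group):
        """Return records this group has not read yet and advance its offset."""
        start = self._offsets.get(group, 0)
        new_records = self._records[start:]
        self._offsets[group] = len(self._records)
        return new_records

    def seek(self, group, offset):
        """Rewind a group so it re-reads (replays) records from `offset` onward."""
        self._offsets[group] = offset


log = EventLog()
log.append({"account": "A1", "amount_cents": 25000})
log.append({"account": "B7", "amount_cents": -6000})

# Two destination systems read the same feed; no separate ETL job per destination
statements = log.poll("statement-service")
fraud_feed = log.poll("fraud-service")

# The statement service finds a problem, rewinds to the start, and replays the feed
log.seek("statement-service", 0)
replayed = log.poll("statement-service")
print(len(statements), len(fraud_feed), len(replayed))  # 2 2 2
```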

AWS Kinesis

Amazon Kinesis, also known as AWS Kinesis, is a cloud service for real-time data streaming. AWS Kinesis Streams make large-scale data ingestion and real-time analysis of streaming data possible (Modi, 2021). The service preserves the order of records within each shard and allows them to be read, or replayed, in the same order. It can quickly handle large volumes of data, making data computation efficient. AWS Kinesis has made modern consumer interaction easier because applications can respond immediately to new information. It can also collect massive volumes of data. Such services have eased the transition from traditional batch processing to modern real-time processing. AWS also offers a broad selection of machine learning services that can be used to improve compliance and verification, automate procedures, enhance consumer experiences, and detect fraud.
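The ordering guarantee can be pictured with a simplified in-memory model. In Kinesis Data Streams, a record’s partition key is hashed with MD5 to a 128-bit value that selects a shard, so records sharing a key stay in order on one shard. The sketch below assumes shards that split the hash range evenly; `ShardedStream` and its methods are hypothetical and are not the AWS SDK.

```python
import hashlib

HASH_SPACE = 2 ** 128  # MD5 digests are 128-bit integers


class ShardedStream:
    """Simplified in-memory model of a Kinesis-style data stream.

    Records are routed to a shard by the MD5 hash of their partition key, so all
    records sharing a key land on the same shard and keep their arrival order.
    """

    def __init__(self, shard_count):
        self.shard_count = shard_count
        self.shards = [[] for _ in range(shard_count)]

    def shard_for(self, partition_key):
        digest = int(hashlib.md5(partition_key.encode("utf-8")).hexdigest(), 16)
        # Even split of the hash space: shard i owns [i * size, (i + 1) * size)
        return digest * self.shard_count // HASH_SPACE

    def put(self, partition_key, data):
        shard = self.shard_for(partition_key)
        self.shards[shard].append((partition_key, data))
        return shard

    def read(self, shard, from_position=0):
        """Read, or replay, a shard's records in the order they were written."""
        return self.shards[shard][from_position:]


stream = ShardedStream(shard_count=4)
for seq in range(3):
    stream.put("account-A1", {"seq": seq})
    stream.put("account-B7", {"seq": seq})

shard_a = stream.shard_for("account-A1")
a_records = [data["seq"] for key, data in stream.read(shard_a) if key == "account-A1"]
print(a_records)  # [0, 1, 2]
```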

In serverless systems, publish-subscribe (pub-sub) messaging is a form of asynchronous service-to-service communication. In the pub-sub model, any message published to a topic is immediately received by all of the topic’s subscribers. This enables software components, such as those built on AWS Kinesis, to connect to a topic to send and receive messages. In event streaming, on the other hand, decisions are made on data as soon as it is produced or broadcast. Apache Kafka makes this possible while eliminating end-to-end dependencies and ETL duplication.
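The pub-sub fan-out described above can be sketched with Python’s `asyncio`: each subscriber receives its own queue, and the publisher delivers every message to all of them without knowing who they are. The `Topic` class and the subscriber names are hypothetical; a managed service would add persistence, delivery guarantees, and network transport.

```python
import asyncio


class Topic:
    """Minimal asynchronous publish-subscribe topic (in-memory sketch)."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self):
        # Each subscriber gets its own queue, so every message reaches all of them
        queue = asyncio.Queue()
        self._subscribers.append(queue)
        return queue

    async def publish(self, message):
        for queue in self._subscribers:
            await queue.put(message)


async def consume(name, queue, count, received):
    """Hypothetical subscriber: records the messages it receives."""
    for _ in range(count):
        message = await queue.get()
        received.append((name, message))


async def main():
    topic = Topic()
    received = []
    consumers = [
        asyncio.create_task(consume(name, topic.subscribe(), 2, received))
        for name in ("statements", "fraud-check")
    ]
    await topic.publish("tx-1")
    await topic.publish("tx-2")
    await asyncio.gather(*consumers)
    return received


received = asyncio.run(main())
print(sorted(received))
```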

Near Real-Time Bank Statement Processing

With a near real-time architecture, bank statements can be generated automatically and retrieved at any time. Real-time processing is closely associated with online transaction processing (OLTP), in which the system’s records consistently represent the current state. Real Time Payments (RTP) typically complete in a matter of seconds, and financial organizations now have software that can generate statements in real time by integrating with RTP systems. A crucial component of real-time payments is the open-loop system, such as credit cards, which allows settlement to go directly into the recipient’s bank account rather than relying on a preloaded balance. With real-time transactions in an open-loop system, one can keep track of one’s balance more efficiently throughout the day. However, reducing payment errors can be challenging without a coherent, nuanced, data-rich strategy. As a result, most banks are now using Apache Kafka to eliminate end-to-end dependencies on bank officials. AWS Kinesis can ingest the massive volumes of transaction data generated within short periods, with simple access to the service. Both can also be used to detect fraud while statements are being produced.
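A statement that can be retrieved at any time can be sketched as a record updated on every payment event rather than compiled at month end. The `LiveStatement` class, the event descriptions, and the amounts are hypothetical; in practice the events would arrive from a stream such as a Kafka topic or a Kinesis shard.

```python
from datetime import datetime


class LiveStatement:
    """Statement updated as each payment event arrives (illustrative sketch)."""

    def __init__(self, account, opening_balance_cents):
        self.account = account
        self.opening_balance_cents = opening_balance_cents
        self.balance_cents = opening_balance_cents
        self.entries = []

    def apply(self, ts, description, amount_cents):
        # Record the event and update the balance immediately, so the
        # statement always reflects the current state of the account
        self.entries.append((ts, description, amount_cents))
        self.balance_cents += amount_cents

    def render(self):
        lines = [
            f"Statement for {self.account}",
            f"Opening balance: {self.opening_balance_cents / 100:.2f}",
        ]
        for ts, description, amount in self.entries:
            lines.append(f"{ts:%Y-%m-%d %H:%M:%S}  {description:<14}{amount / 100:>10.2f}")
        lines.append(f"Current balance: {self.balance_cents / 100:.2f}")
        return "\n".join(lines)


stmt = LiveStatement("A1", 100000)
stmt.apply(datetime(2022, 3, 1, 9, 0, 5), "RTP received", 25000)
stmt.apply(datetime(2022, 3, 1, 9, 2, 40), "Card payment", -4550)
print(stmt.render())  # retrievable at any moment, not only at month end
```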

Conclusion

In brief, banks that use conventional batch processing do not need to switch to real-time processing immediately. The transition can be gradual, beginning with the procedures that make the most sense for each bank and broadening as the advantages become apparent. Batch processing systems are more valuable when data needs to be processed at specific points, while real-time processing is more useful when information needs to flow continuously. Even with this gradual strategy, banks will see tangible benefits, because real-time access enhances other systems. Demand for real-time processing is already high in the financial sector, as reflected in the widespread use of Apache Kafka across various use cases.

References

Dastmalchian, A., Bacon, N., McNeil, N., Steinke, C., Blyton, P., Satish Kumar, M., Bayraktar, S., Auer-Rizzi, W., Bodla, A. A., Cotton, R., Craig, T., Ertenu, B., Habibi, M., Huang, H. J., İmer, H. P., Isa, C. R., Ismail, A., Jiang, Y., Kabasakal, H., & Varnali, R. (2020). High-performance work systems and organizational performance across societal cultures. Journal of International Business Studies, 51(3), 353-388. Web.

Gurusamy, V., Kannan, S., & Nandhini, K. (2017). The real time big data processing framework advantages and limitations. International Journal of Computer Sciences and Engineering, 5(12), 305-312. Web.

Jawhar, Q., Thakur, K., & Singh, K. J. (2020). Recent advances in handling big data for wireless sensor networks. IEEE Potentials, 39(6), 22-27. Web.

Kraetz, D., & Morawski, M. (2021). Architecture patterns—Batch and real-time capabilities. The Digital Journey of Banking and Insurance, Volume III, 89-104. Web.

Marquit, M. (2022). What is a bank statement? The Balance. Web.

Modi, K. (2021). System Design — Choosing between AWS kinesis and AWS SQS. Medium. Web.

O’Connor, M., Conboy, K., & Dennehy, D. (2022). Time is of the essence: A systematic literature review of temporality in information systems development research. Information Technology and People. Web.

Pattanaik, M., & Umarkar, P. (2021). Is Apache Kafka the next big thing in banking? Oracle. Web.